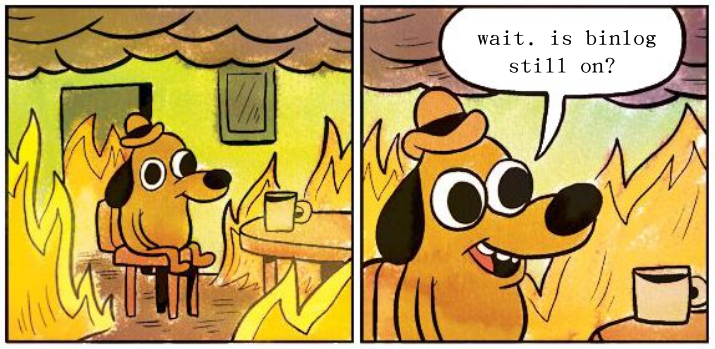

a week ago, i ran this db insert-heavy script on a server and forgot to turn off mysql’s binlog feature first. the result, of course, was that i filled up the disk in about three minutes and brought the whole server down. not great for a tuesday. fortunately, finding and fixing the problem was straightforward. the downtime was only a couple of minutes.

in this post we’re going to go over inspecting our disk space; figuring out how much we have left and finding out what we spent all those blocks on. we’ll look at three basic tools:

df for inspecting disk space

du for getting directory sizes

find for finding files to delete

these all come pre-packaged with your linux or linux-like operating system, so put that apt back in your pocket.

using df to find out how much space you actually have

df stands for “disk free” and, as the name implies, it tells you how much free space you have on your disk (plus some other stuff). let’s look:

$ df

Filesystem 1K-blocks Used Available Use% Mounted on

/dev/root 24199616 22983688 1199544 96% /

tmpfs 1010020 0 1010020 0% /dev/shm

tmpfs 404012 916 403096 1% /run

tmpfs 5120 0 5120 0% /run/lock

/dev/xvda15 106858 6165 100693 6% /boot/efi

/dev/xvdf 102626232 68248896 29118072 71% /var/backups

/dev/xvdg 61611820 5569548 52880160 10% /var/www/html

tmpfs 202004 4 202000 1% /run/user/1000

this is useful data. kinda. it shows all the partitions we have and how big they are and how much is available, and it even gives us a ‘percent used’ column, but those numbers are all in blocks. if we want something more human-readable, we can apply the -h switch which stands for, conveniently, ‘human readable’:

$ df -h

Filesystem Size Used Avail Use% Mounted on

/dev/root 24G 22G 1.2G 96% /

tmpfs 987M 0 987M 0% /dev/shm

tmpfs 395M 916K 394M 1% /run

tmpfs 5.0M 0 5.0M 0% /run/lock

/dev/xvda15 105M 6.1M 99M 6% /boot/efi

/dev/xvdf 98G 66G 28G 71% /var/backups

/dev/xvdg 59G 5.4G 51G 10% /var/www/html

tmpfs 198M 4.0K 198M 1% /run/user/1000

now we can easily see that our root partition is 24 gigs, 22 of them are used, and we have 1.2 gigs available. much better.

filtering df output

of course there’s still the issue of all those tmpfs entries. we don’t really care about them. fortunately, we can limit df’s output to just ext4 partitions with the --type=<filesystem> argument:

$ df -h --type=ext4

Filesystem Size Used Avail Use% Mounted on

/dev/root 24G 22G 1.2G 96% /

/dev/xvdf 98G 66G 28G 71% /var/backups

/dev/xvdg 59G 5.4G 51G 10% /var/www/html

if we’re not sure what file systems we have mounted, we can check with df -T:

$ df -T | awk '{print $2}' | sort | uniq

Type

ext4

tmpfs

vfat

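here we got df to add a column with the filesystem type of each partition. we then used a little awk to pull out just that column and piped the results through sort and uniq to give us a nice list of all the file systems we have. (the stray ‘Type’ in there is just the column header coming along for the ride.)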
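if the header line bugs you, here’s a minimal sketch that skips it. fs_types is a made-up helper name, not something df ships with, and it assumes the type sits in the second column the way df -T prints it:

```shell
# fs_types: hypothetical helper. reads `df -T` output on stdin and
# prints each filesystem type once, without the header row.
fs_types() {
    # NR > 1 skips the header; column 2 is the type in `df -T` output
    awk 'NR > 1 { print $2 }' | sort -u
}

# usage: df -T | fs_types
```

sort -u does the same job as sort | uniq in one step.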

or maybe we want to get the results for every partition except tmpfs. we can do that with --exclude-type=<filesystem>:

$ df -h --exclude-type=tmpfs --exclude-type=vfat

Filesystem Size Used Avail Use% Mounted on

/dev/root 24G 22G 1.2G 96% /

/dev/xvdf 98G 66G 28G 71% /var/backups

/dev/xvdg 59G 5.4G 51G 10% /var/www/html

we can pass as many --exclude-type arguments as we want. here, for instance, we’re excluding both tmpfs and vfat.

if we want to get data about only one partition, we can pass a path to a file or directory as an argument, and df will only report on the partition where that file or directory resides. this is great stuff if, like me, you can’t be bothered to figure out what partition the directory you can’t write to lives on.

$ df -h /path/to/dir/or/file

Filesystem Size Used Avail Use% Mounted on

/dev/xvdg 59G 5.4G 51G 10% /path/to

what we really care about is percentage use

if we’re writing some sort of home-rolled disk-monitoring script, probably the thing we’re most worried about is the percentage used. anything north of 90 and maybe it’s time to freak out.

we can tell df to just give us the percentage used by passing the --output argument with pcent like so:

$ df -h --output=pcent /var/www/html

Use%

10%

and if we want that as a nice integer that we can use in an if statement, we can apply a little awk and sed.

$ df -h --output=pcent /var/www/html | awk 'END{print $0}' | sed -e 's/%\| //g'

10
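to show where that integer might end up, here’s a rough sketch of a home-rolled check. everything named here (pcent_to_int, over_limit, check_disk) is hypothetical, the 90 default is just the “freak out” number from above, and it assumes GNU df for the --output switch:

```shell
# pcent_to_int: read `df --output=pcent` output on stdin and print the
# number from the last line, minus the padding and the percent sign.
pcent_to_int() {
    awk '{ last = $0 } END { gsub(/[^0-9]/, "", last); print last }'
}

# over_limit USED LIMIT: succeeds when USED is at or above LIMIT.
over_limit() {
    [ "$1" -ge "$2" ]
}

# check_disk PATH [LIMIT]: hypothetical monitoring check; LIMIT defaults to 90.
check_disk() {
    local path=$1 limit=${2:-90} used
    used=$(df --output=pcent "$path" | pcent_to_int)
    if over_limit "$used" "$limit"; then
        echo "freak out: $path is at ${used}%"
        return 1
    fi
    echo "all good: $path is at ${used}%"
}

# usage: check_disk /var/www/html 90
```

the non-zero return code on failure means a cron job or another script can react to it without parsing the message.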

the --output argument takes a bunch of possible values, the most-useful ones being:

avail: the amount of available space

used: the amount of used space

source: the name of the partition, i.e. /dev/sdb

target: the mount point of the partition, i.e. /var/www/html

maybe we also want a nice digest of our disk space usage that we can put in our motd output, so we see it every time we log in. for that we can combine some df arguments we’ve learned and use tail to strip off the headers.

$ df --output=target --output=pcent --type=ext4 | tail -n +2

throw that in our motd script and we’ll be greeted with

/ 79%

/var/www/html 65%
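if we want the digest to shout when something is filling up, we could pipe it through a small filter. a rough sketch: flag_full is a hypothetical helper, the 90 default is an arbitrary pick, and it assumes the percentage is the last field on each line:

```shell
# flag_full [LIMIT]: read "mountpoint NN%" lines on stdin and append a
# marker to any line whose percentage is at or above LIMIT (default 90).
flag_full() {
    local limit=${1:-90}
    awk -v limit="$limit" '{
        used = $NF
        sub(/%/, "", used)      # "96%" -> "96"
        mark = (used + 0 >= limit + 0) ? "  <-- running low" : ""
        print $0 mark
    }'
}

# usage: df --output=target,pcent --type=ext4 | tail -n +2 | flag_full 90
```

lines under the limit pass through untouched, so the motd looks the same as before on a healthy day.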

inspecting directories with du

knowing that our disk is full is great (well, not “great”, per se), but if we’re going to fix it, we’re going to need to know where all that disk space is going. how big is our /var/log/ dir? how much cruft do we have stuffed in /tmp/? that sort of thing.

the du command shows us the disk usage of any directory and the files in it. that’s why it’s called du; it’s about disk usage.

$ du /path/to/directory/

running du without any switches outputs an unholy mess: the size of every single directory and subdirectory under the target path (and with -a, every single file too). if you have a lot of files, this can take a coffee-making length of time. not super useful or efficient.

we can limit that output with the -csh switches:

$ du -csh /path/to/dir/

433M /path/to/dir/

433M total

let’s look at those switches:

-c: add a grand ‘total’ line at the end of the output.

-s: output only the ‘summary’ for the target directory, not the size of every single thing in it.

-h: show the results in a ‘human readable’ format. we want to see gigs and megs, not blocks.

as an alternative to -h, we can use -k to get our output in kilobytes without the unit displayed. this is great stuff if we’re writing a script that needs a number we can do math with.
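for instance, a script could grab the kilobyte count and do integer math on it. a minimal sketch, with dir_kb and kb_to_mb as made-up helper names:

```shell
# dir_kb DIR: print the disk usage of DIR as a bare number of kilobytes.
dir_kb() {
    du -sk -- "$1" | awk '{ print $1 }'
}

# kb_to_mb KB: integer conversion, rounds down.
kb_to_mb() {
    echo $(( $1 / 1024 ))
}

# usage: echo "$(kb_to_mb "$(dir_kb /path/to/dir)") megs"
```

shell arithmetic only does integers, so anything under a meg comes out as 0.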

if we want our du -csh call to just output the total number and unit without any of the explanatory cruft, we can apply a little awk to clean it up.

$ du -csh /path/to/dir/ | awk 'END{print $1}'

433M

of course, that’s a hassle to remember (and type!) every time we want to know how much space our mp3 collection is taking up. if we’re using the bash shell, we can pack that line into a nice function:

$ dtotal() { du -csh "$1" | awk 'END{print $1}'; }

and use it like so:

$ dtotal /path/to/dir

433M
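once we know a directory is big, the next question is usually which of its subdirectories is to blame. one way to sketch that, assuming GNU du (for --max-depth) and with biggest_dirs as a hypothetical helper name:

```shell
# biggest_dirs [DIR] [COUNT]: list DIR and its immediate subdirectories
# by size in kilobytes, largest first. defaults: current dir, 10 lines.
biggest_dirs() {
    local dir=${1:-.} count=${2:-10}
    # -k keeps the sizes as plain kilobytes so sort -rn can order them;
    # the top line is usually DIR itself, i.e. the total
    du -k --max-depth=1 -- "$dir" 2>/dev/null | sort -rn | head -n "$count"
}

# usage: biggest_dirs /var 5
```

the 2>/dev/null hides the ‘permission denied’ noise we get when poking around without root.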

finding disk hogs with find

finding the directories that are eating up our disk space only gets us so far. we’re going to want to find specific files that are candidates for deletion. we can do that with find.

usually, we use the find command to search for files by name, but we can also use the -size argument to search for files that are large:

$ find /path/to/directory/ -size +1G

/path/to/directory/very-large-tarball.tar

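here, we’re searching for every file in a directory that has a size greater than one gig. if we want to search by megs instead, we could use something like -size +500M, and if, for some reason, we’re looking for smaller files, we can use a minus sign where the plus sign goes to get files smaller than the stated size. one catch: find rounds file sizes up to the next whole unit before comparing, so -size -1G only matches empty files. to find files under a gig, something like -size -1024M works better.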
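a bare list of paths doesn’t tell us which file is the worst offender, so it can help to print sizes and sort. a rough sketch, assuming GNU find (for -printf); big_files is a made-up name and the +100M default is arbitrary:

```shell
# big_files DIR [MIN]: list regular files under DIR matching -size MIN
# (default +100M) as "bytes<TAB>path", biggest first.
big_files() {
    local dir=$1 min=${2:-+100M}
    # %s is the size in bytes, %p the path
    find "$dir" -type f -size "$min" -printf '%s\t%p\n' 2>/dev/null | sort -rn
}

# usage: big_files /path/to/directory +1G
```

adding -type f keeps directories and other non-files out of the results.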

filtering find by file age

if our disk space problem is a new thing, we’re going to want to find large files that were created or written to recently. we’ve all known devs who’ve done a print_r() of a large object to a file in a loop and let it run for weeks. we can find that file by narrowing our find down to files that have been modified in the last day.

$ find /path/to/directory/ -size +1G -mtime -1

/path/to/directory/very-large-and-recent-tarball.tar

the -mtime argument here means ‘modified time’ and the unit of -1 is days. by passing -mtime -1 to find we’re saying we want files larger than one gig that were modified less than one day ago.

of course we can use any number of days we wish, or pass something like -mtime +7 to find files that were modified more than seven days ago. great for ferreting out those old backup files we totally forgot about.
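putting size and age together, a cautious cleanup pass might only list candidates and leave the deleting to a human. a minimal sketch; stale_files is a hypothetical name, and the defaults (seven days, one gig) are just placeholders:

```shell
# stale_files DIR [DAYS] [MIN]: list regular files under DIR that match
# -size MIN (default +1G) and were last modified more than DAYS days ago
# (default 7). it only prints paths; nothing gets deleted.
stale_files() {
    local dir=$1 days=${2:-7} min=${3:-+1G}
    find "$dir" -type f -size "$min" -mtime "+$days" -print
}

# usage: stale_files /var/backups 30 +500M
```

it’s worth eyeballing the list before piping it into anything destructive.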

co-founder of fruitbat studios. cli-first linux snob, metric evangelist, unrepentant longhair. all the music i like is objectively horrible. he/him.